HI! MY NAME IS ANDER

VISUALS • 3D • INTERACTION

About Me

I’m currently in my third semester of communication design, still very much in the process of finding my creative direction. I’m driven by curiosity and love messing around with different technologies, trying new tools, learning by doing and seeing where experiments lead. Lately, I’ve been especially drawn to interactive media and 3D, working mainly with Blender and TouchDesigner, while also slowly diving into programming and discovering its creative possibilities.

PROJECTS

This project was created using TouchDesigner, a real-time visual programming environment for interactive graphics, together with MediaPipe, an open-source framework from Google (available on GitHub) that enables hand tracking and other computer-vision features.

I used MediaPipe to track hand movements, which are then translated into real-time visual changes in TouchDesigner, allowing touch-free interaction between the body and the digital space.
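As a rough illustration of how a tracked hand can become a control value, here is a minimal Python sketch. It is not the actual project patch: the function name `pinch_amount`, the `max_distance` calibration value, and the sample data are assumptions made for this example. It relies only on MediaPipe's documented hand landmark layout, in which each hand has 21 points and indices 4 and 8 are the thumb tip and index fingertip.

```python
import math

# MediaPipe's hand model returns 21 landmarks per hand with normalized
# x/y coordinates; index 4 is the thumb tip, index 8 the index fingertip.
THUMB_TIP = 4
INDEX_TIP = 8

def pinch_amount(landmarks, max_distance=0.3):
    """Map the thumb-to-index distance to a control value in [0, 1].

    `landmarks` is a list of 21 (x, y) tuples in normalized image coordinates.
    `max_distance` is an assumed calibration value, not taken from the project.
    """
    tx, ty = landmarks[THUMB_TIP]
    ix, iy = landmarks[INDEX_TIP]
    distance = math.hypot(ix - tx, iy - ty)
    # Clamp so that a wide-open hand never exceeds the parameter range.
    return min(distance / max_distance, 1.0)

# Made-up landmark data: every point at the origin except the index fingertip.
hand = [(0.0, 0.0)] * 21
hand[INDEX_TIP] = (0.15, 0.0)
value = pinch_amount(hand)
```

A value like this could then drive any visual parameter, for example the scale or brightness of an element.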

This project prototype explores interactive sound and visuals using physical sensors. A light sensor and a color sensor connected to an Arduino capture environmental data, which is sent to TouchDesigner to control the visual output in real time.

TouchDesigner also transmits these sensor values to VCV Rack 2 (a virtual modular synthesizer) via OSC, allowing the same inputs to shape the sound simultaneously. In theory, this creates an instrument that can be played through movement and light, enabling interaction with both sound and visuals without physical contact.
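To show what travels over the wire in such a setup, here is a minimal Python sketch of an OSC message carrying a single sensor value. TouchDesigner's built-in OSC Out operators do this encoding themselves, so this is only an illustration. The address `/light` and the value `0.5` are hypothetical and not taken from the project.

```python
import struct

def osc_string(text):
    """Encode a string the OSC way: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    padding = (4 - len(data) % 4) % 4
    return data + b"\x00" * padding

def osc_float_message(address, value):
    """Build an OSC message with one 32-bit big-endian float argument."""
    # Layout: address pattern, type tag string (",f" = one float), then the float itself.
    return osc_string(address) + osc_string(",f") + struct.pack(">f", value)

# Hypothetical example: a normalized light-sensor reading sent to the address "/light".
packet = osc_float_message("/light", 0.5)
```

The resulting bytes could be sent as a UDP datagram with Python's `socket` module to whatever port the receiving software listens on.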

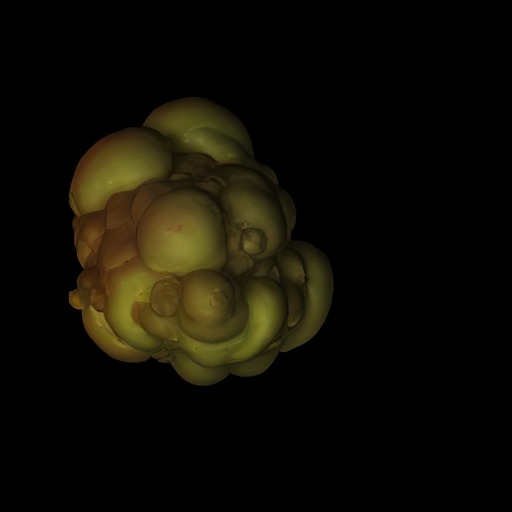

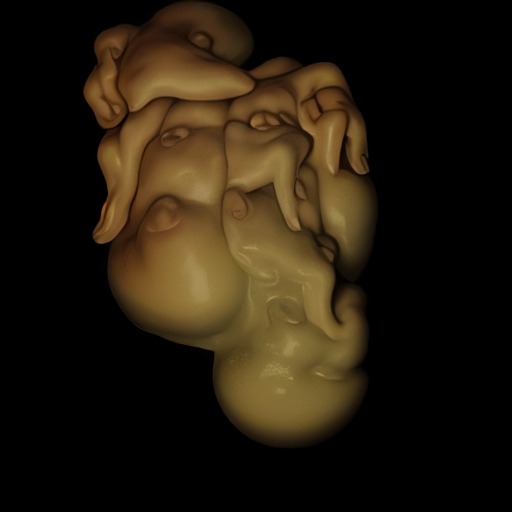

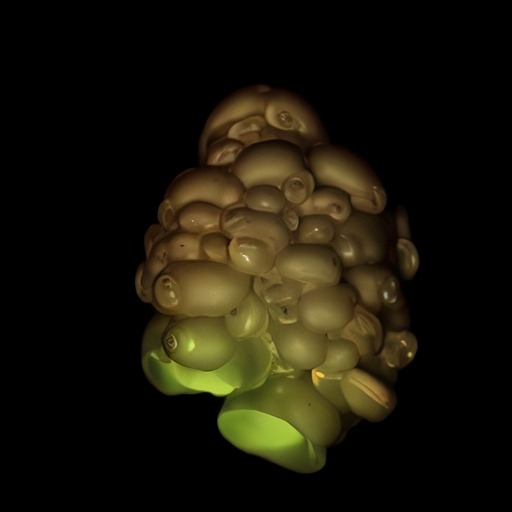

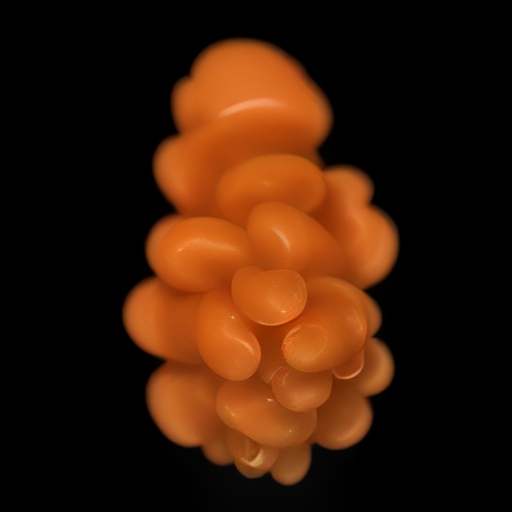

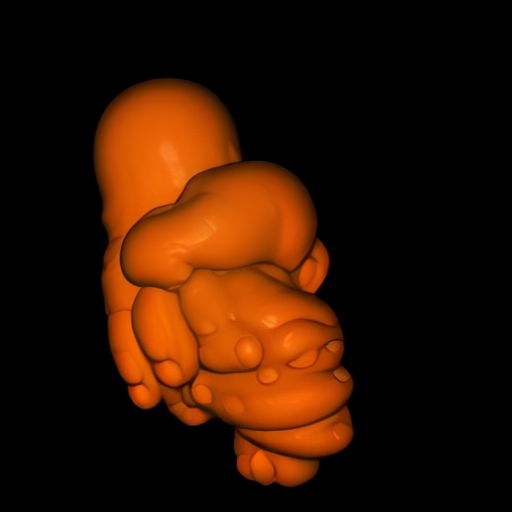

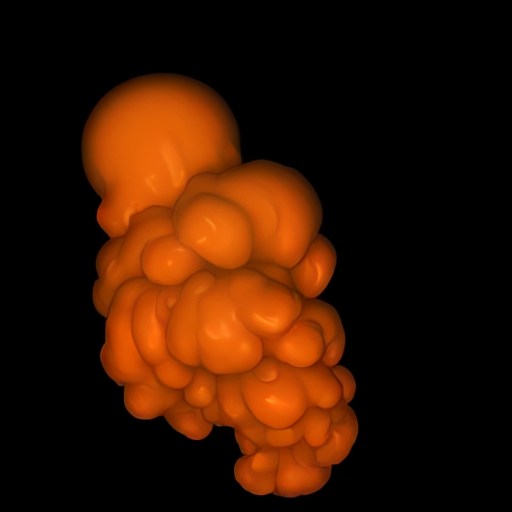

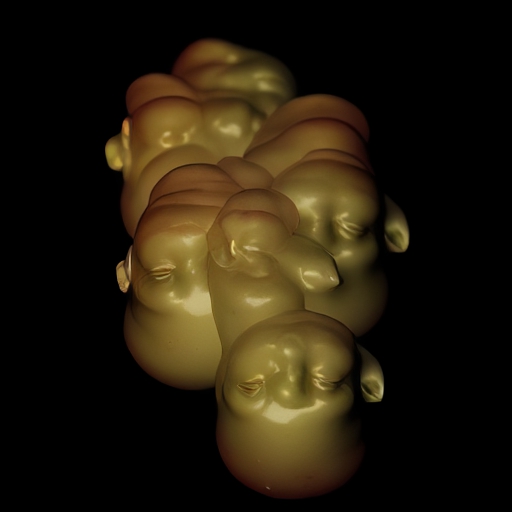

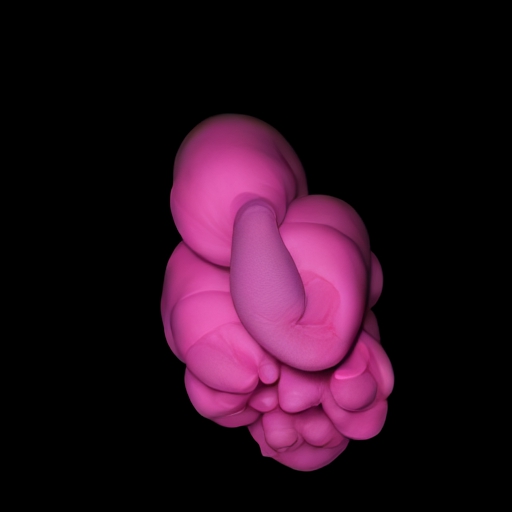

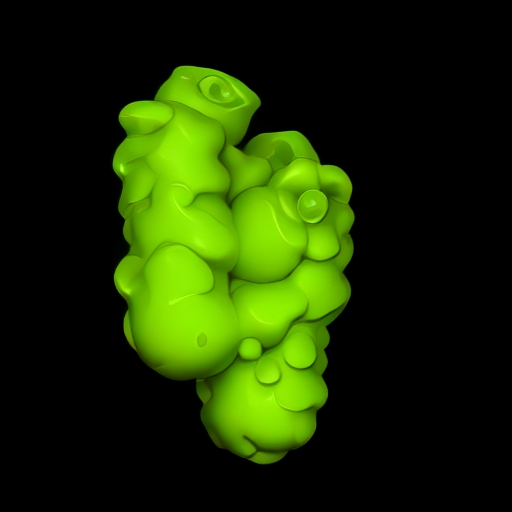

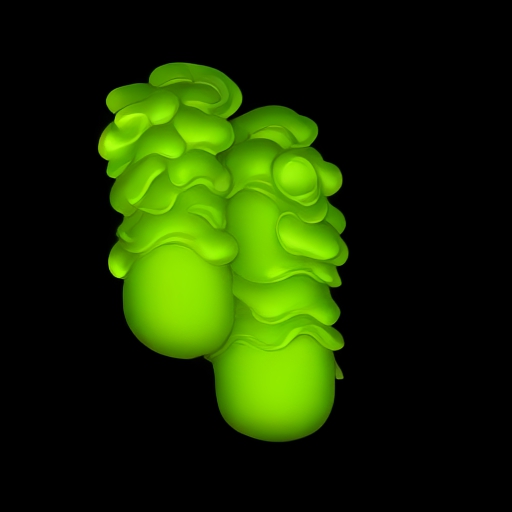

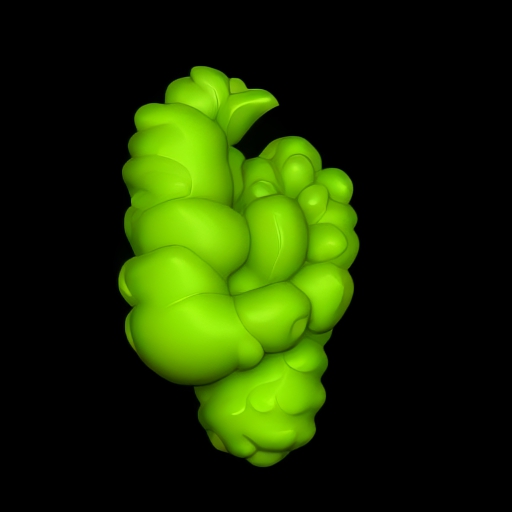

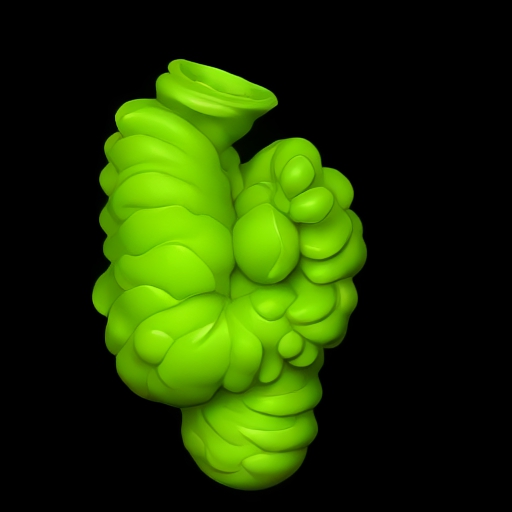

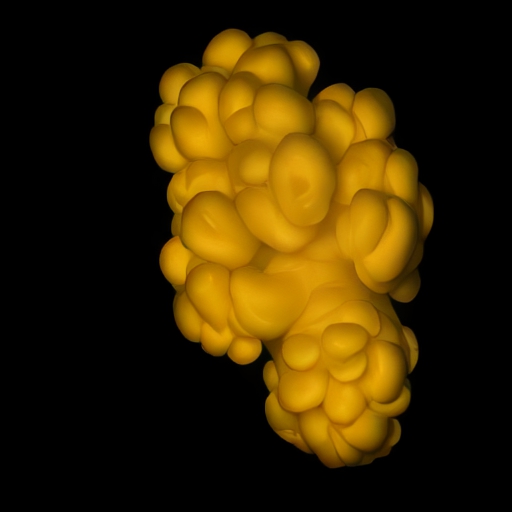

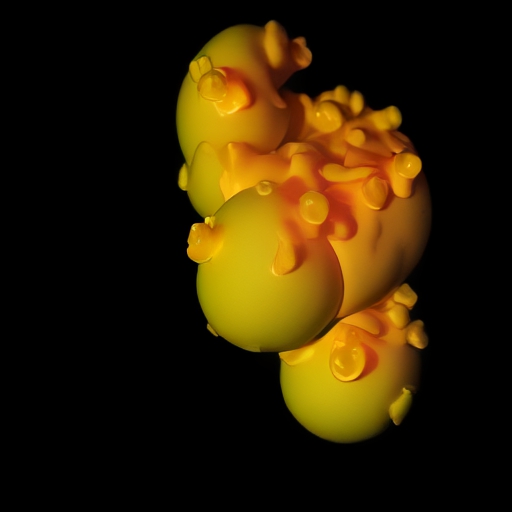

This project explores real-time interaction between a 3D object and AI-generated visuals. The 3D model was created in Blender and imported into TouchDesigner, where a custom controller allows the user to rotate, move, and scale the object.

The visuals are generated using StreamDiffusion, an AI plugin by dotsimulate, that continuously transforms images in real time. Along with controlling the object, the controller also allows the user to adjust the level of AI “hallucination,” influencing how strongly StreamDiffusion alters the input.

Every interaction produces a new visual result. The images shown below are single renders captured from these live outputs.

The idea of this project was to create oscilloscope music/art, inspired by the YouTube channel Jerobeam Fenderson. Oscilloscope music is a form of audiovisual art where sound is shaped to create specific images on an oscilloscope, effectively allowing images to be “drawn” using audio signals.

I created this project in a sound design class focused on learning VCV Rack 2, a virtual modular synthesizer.
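The principle of drawing with audio can be sketched in a few lines of Python. This is an illustration only, not the VCV Rack patch from the project. It assumes an oscilloscope in X-Y mode where the left channel drives the horizontal axis and the right channel the vertical axis. The function name and default values are made up for this example.

```python
import math

def circle_samples(frequency=100.0, sample_rate=48000, duration=0.01):
    """Return (left, right) sample pairs that trace a circle in X-Y mode."""
    count = round(sample_rate * duration)
    samples = []
    for n in range(count):
        phase = 2.0 * math.pi * frequency * n / sample_rate
        # A cosine on the left channel and a sine on the right channel are
        # 90 degrees apart, so the beam moves around the unit circle.
        samples.append((math.cos(phase), math.sin(phase)))
    return samples

# With the defaults, 480 samples cover exactly one cycle of a 100 Hz tone.
points = circle_samples()
```

Changing the frequency ratio or phase between the two channels turns the circle into other Lissajous figures, which is the starting point for more complex shapes.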

I created this audio-reactive visual in TouchDesigner. For the audio, I generated a melody with Udio and then edited it in Ableton Live 12 Lite, a digital audio workstation used for composing and editing music.

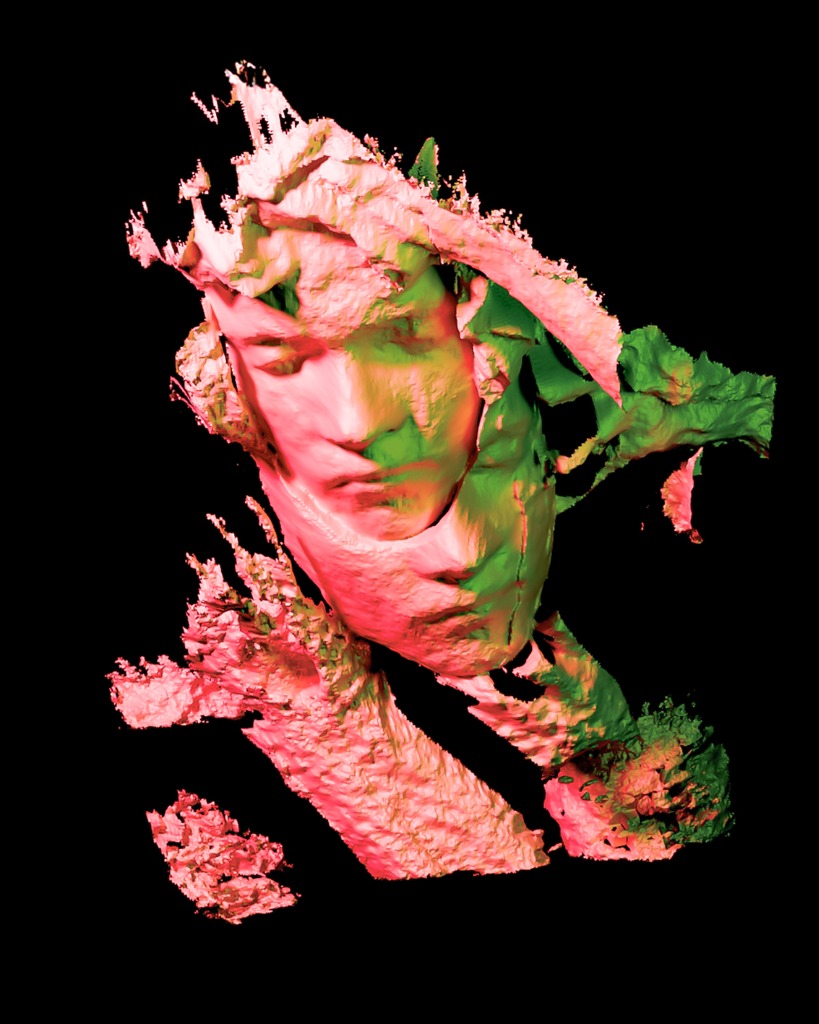

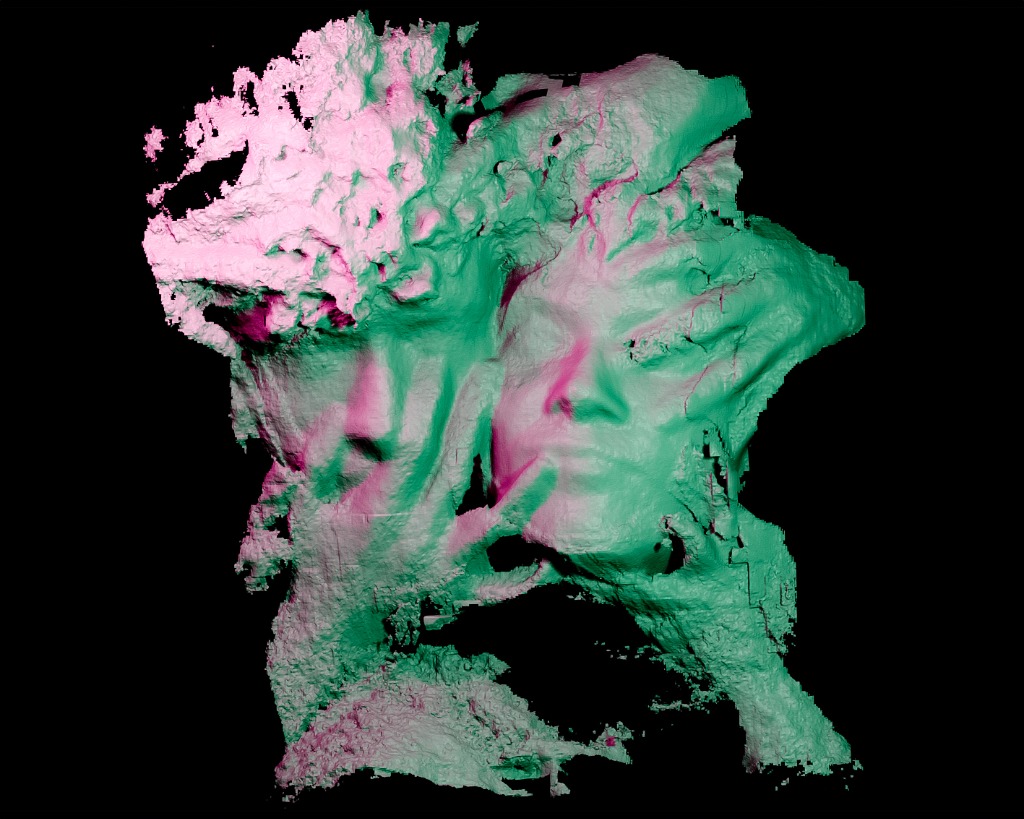

I created these scans with the Heges 3D scanning app, then edited and refined them in Blender and Photoshop to achieve the final aesthetic.

Sometimes I like to draw as well. These were made with pencils, then scanned and edited in Photoshop.

INFO / CONTACT

Ander Wart

Hochschule Düsseldorf

University of Applied Sciences

Communication Design

Third semester (Bachelor)